Hi,

I have been trying for a while now, but I have never managed to make my system compensate for the voltage drop on the DC side.

The system is composed of:

2x MPPT 250/60 ver. 1.37

Venus device, ver. 2.23

Multiplus 3000/24/70 ver. 423

Battery bank: 24 V, 14 kWh, OPzS

Cable cross-sections of 35 and 50 mm², with lengths of around 5 m from the MPPTs to the battery and 2 m between the battery and the inverter.

2 fuses involved along the way

Voltage drop to compensate at high currents: around 0.3 to 0.7 V at most.
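As a rough sanity check on that figure, here is a minimal sketch of the ohmic drop in the MPPT-to-battery cable. It assumes a copper resistivity of about 0.0175 Ω·mm²/m, counts both conductors (out and back), and ignores the fuses and terminal contact resistance. The function name and the 60 A example current (the rated output of one MPPT 250/60) are only illustrative.

```python
# Approximate resistivity of copper at room temperature (assumption).
COPPER_RESISTIVITY_OHM_MM2_PER_M = 0.0175


def cable_voltage_drop(current_a, one_way_length_m, cross_section_mm2,
                       resistivity=COPPER_RESISTIVITY_OHM_MM2_PER_M):
    """Return the resistive voltage drop over a two-conductor DC cable run."""
    # Current flows out on the positive and back on the negative conductor,
    # so the effective conductor length is twice the one-way run.
    loop_length_m = 2 * one_way_length_m
    resistance_ohm = resistivity * loop_length_m / cross_section_mm2
    return current_a * resistance_ohm


# 5 m run from one MPPT to the battery at 60 A, for both cable sizes.
for area_mm2 in (35, 50):
    drop_v = cable_voltage_drop(60, 5, area_mm2)
    print(f"{area_mm2} mm2: {drop_v:.2f} V")
```

With these assumptions the cable alone accounts for a few tenths of a volt at full current, so with fuses and connections added, the observed range looks plausible.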

Without DVCC, both the MPPTs and the Multiplus report correct voltages. The Multiplus is closer to the battery and has its voltage sense input correctly connected (confirmed with a multimeter).

In the absorption stage, the battery voltage falls short of the target value and rises slowly as the current drops. Only in the final moments before going to float does the voltage reach the expected 28.8 V plus temperature compensation.

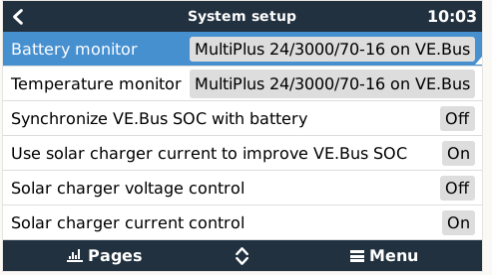

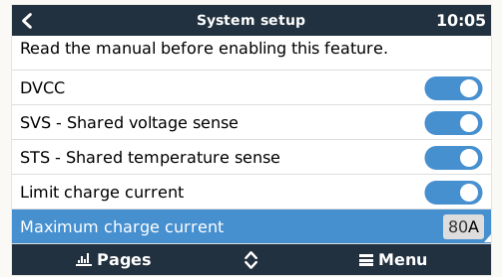

So I enabled DVCC + SVS. Instead of the MPPTs raising their output voltage a little, nothing changes in the system, and the only difference is that the Multiplus now reports the MPPT voltage instead of its own! The selected battery monitor is the Multiplus.

Yesterday I switched off DVCC and temporarily added a Smart Battery Sense, just to test. The system started to respond as expected, and the MPPTs immediately raised their output voltage to give a true 28.8 V plus temperature compensation at the battery terminals. But I would rather use DVCC, since I already have a Venus device.

Any idea what is going on?

Thanks and regards